(4月2日注:经提醒,我对10年和11年NOIP满分统计的回忆并不准确。不过我想,就我要表达的意思而言,这个区别没有本质上的重要性。我并没有查证并改正。不想再回忆那时的事了。)

(4月1日注:经吴争锴本人提醒我把他的名字打错了,已改正。另,依当事人的要求,删去了批评一个同年的phd数学能力差的部分)

16年我刚刚读phd的时候,有段时间经常会写写自己的生活。因为初到美国很多事情都很新鲜,而且读phd也是个特别的人生阶段,想记录下来为今后的自己留下一点什么。但只有半年就停了。因为17年初我找到了想做的问题,打算集中精力先把我的第一个项目做好,等到发了第一篇paper再写也不迟。

希望能早一点完成。因为人生短暂,没有时间可以浪费。

不幸的是,我让自己失望了。一转眼,三年过去了,我独立写过4篇paper,外加作为一作参与了一个项目,一共投稿10次,然而就是一篇也没能发表。一无所成,获得的只有无尽的痛苦。何以走到这一步呢?答案大概是有的。然而我一贯不擅长给事情下结论,因此还是讲讲故事吧。

尽管许多年已经过去了,从某种意义上,从我高三的后一半开始,跨越本科四年,直到读博三年半至今,都是我作为OIer的最后的日子的延续。

假如以升学目标来划分,国内的中学生,无论是靠高考还是竞赛还是什么别的途径,大概是可以分为几档的。很大的一部分,清华北大对他们来说是遥不可及的。往上,有一些人,清华北大是他们的终极目标。再往上,有少数的人,清华北大是他们的最低标准。参加学科竞赛的人中,大概也是前两种占多数。然而学科竞赛究竟是一种奢侈,是第三种人的事业。他们参加竞赛是为了竞赛自身而不是为了升学,只要能发挥自身实力,获得保送是可期望的结果,只有竞赛自身的荣誉是需要争夺的。对于前两种人来说,处于一个不出意外就能保送的位置上,大概可以说是天堂了吧。然而对于第三种人中的某一些,这是个不折不扣的炼狱。我便是把自己归于这一类的。

我曾经是个很有前途的竞赛生。六年级开始参加NOIP,后来各种数学竞赛也有涉猎,从初二开始差不多每过几个月就要在校报头版出现一回。初三,我第一次获得NOIP提高组一等奖。高中联赛一等奖也就意味着保送考试资格。很多竞赛生便是到了高三也没有拿到过。然而我列举这些,却没有吹嘘的意思,因为以取得保送考试资格为目标的人才把高中联赛一等奖当作一项成绩,对于要到国赛一争高下的人,无非是玩一玩罢了。

09年,初中毕业的暑假,我第一次参加NOI。当时我刚刚在毕业考试中取得全班第一,正是个意气风发的少年。那一年我的成绩并不好,连个铜牌的渣也没得到。不过这并不要紧,毕竟我只是个夏令营选手,主要目的是参观学习,当时连最大流的增广路算法都没有写过——否则不至于连植物大战僵尸(第二天第一题)都苦手。然而令人不安的迹象已经显现了。那一年,与我同一届的张放获得了金牌。

张放是个令人印象深刻的人。说他好玩大概不过分——是聪明但有点天然的那种,不是愚蠢的那种。他上去讲描边(第二天第三题)的时候,有个测试点画出来是个“80中”(那年的比赛在北京80中),结果他说是个“日口中”。他常常把自己的解法形容为“瞎搞”。然而实力是毋庸置疑的。

我隐隐感到我和他之间存在着巨大的鸿沟。

我中学常读数学科普书,见识过不少天才,也很明白自己不是一个天才——大概与普通人相比还是很聪明的,但在聪明人里就很普通了。然而对此没什么实感,毕竟假如把自己与伽罗瓦那样的上古神兽相比的话,无论是时间空间还是实力相差都极大,实在是什么也比不出来的。然而此时此刻,面对一个将来要同场竞技的人,实力的差距就突然变得无比真实了。此前通向未知的充满无限可能的学科竞赛的道路,此刻似乎显出了尽头。

之后高中生活开始了。NOIP2009,我取得全省第二,而第一的是个高三党。10年初,顺利通过省选。之后的一年大概是我的OI生涯的高峰了。NOI2010,成绩比预想的好,排在第30多名,甚至AC了一道全场只有10人做出的题(海拔,第一天第三题)。中科大在现场负责招生的老师找到我,说少院有个项目,如果我有兴趣的话当年就可以入学。但我是一心想去清华的,便谢绝了。

然而此后我却没能在国赛上再前进一步。至于原因,我在高三的时候反思过很久,但终于没有给出答案。是我不够努力吗?扪心自问的话,我确实是没有把所有时间有效利用的。刷题不够。有用的知识点没有掌握。但我看不出怎样的努力能让我在初三甚至初二就获得NOI金牌。

我是个不太相信奋斗的人。本科之前我是个唯天赋论者。即使现在,对于奋斗能对我所在乎的事情产生多大的影响,我仍然是十分怀疑的。我中学的时候逍遥自在,不愿意在课业上多花精力,连作业都懒得写。现在回想起来,真不知道当时是哪来的自信。我初一的时候成绩并不好,大概是因为别的同学为了考进东北育才常年补课而我小学一直在放羊,六年级以参加竞赛为借口甚至很少在班里上课。由于不好好努力,初一的时候我也没少被批评,甚至被我妈揍了一顿——我从小几乎是没挨过打的。

然而我厚着脸皮死也不改。到了初二下学期,我的成绩已经稳定在班级前10了,数学更是几乎无争议的第一。作业自然仍是不写,老师们终究拿我没有办法。到了初三,就在NOIP2008获得省一之后的一个月,在升高中的分流考试上,我第一次考了全班第一。同学们——他们的家长们更甚——视我为天才。我却心情复杂。

我的同学们也绝非等闲之辈。东北育才是辽宁数一数二的中学,我的同学们是全市成绩最优秀的同龄人。我竟如此轻易的在这里鹤立鸡群了。一方面,这使我十分愧疚。这样的只靠很少的努力获得的成功,似乎是某种不正当的偏得。另一方面,这使我相信,天赋的差距是极难在后天弥补的。

然而,奋斗却似乎又的确是有用的,毕竟在像雅礼或者人大附那样的学校,金牌选手可以被成批地培养出来。不可能人人都是天才的。

不过,金牌与金牌也是有分别的吧!正如有些人把清华北大当作最高目标而有的人把清华北大当作最低标准一样。靠多年的练习与积累,可能又恰好遇到自己擅长的题目,惊险地越过分数线的金牌,与随手秒杀全场的金牌,终究是不同的。前者对于个人或许更是一个来之不易的因而值得喜悦的成就,却永远没有后者那般耀眼。

越靠近才能分布的顶端,人与人的差距就越是大到令人绝望。不必到OI的历史书里去找楼教主——就在此时此刻,NOI2010的赛场上,在我摸爬滚打不得要领的时候,像比我还小一届的范浩强已经十分受人瞩目了。

再往上,还有连与之同场竞技的机会都没有的传说中的选手,比如tourist

再往上,还有学科竞赛选手出身的神一样的人,比如陶哲轩

……

我或许有可能通过努力获得金牌,但即便如此,也永远只是作为天才们的陪衬吧。

到了高二。NOIP2010,我获得了满分。这一年全国也只有16个满分而已。高兴是自然的,但日子仍是在自我怀疑之中一天天变得沉闷起来。初中时那种毫无顾虑的冲劲再也找不到了。能力的增长也遇到了现实的困难:没有人教我。OI涉及的知识极繁杂,更新又很快。很多没有系统的学习资料的内容,就要靠口耳相传。如果没有个实力强的教练的话,就要靠师兄带师弟了。我们这里却没这个条件。邱老师是个名教练,但她带出IOI选手已经是十年前的事了,NOI的难度早已今非昔比。至于师兄,我们的上一块NOI金牌也起码有四五年了。如今我连个水平相当可以共同进步的同学也没有。而我,既不是一点就通的天才,作为一个长期自由散漫的中学生,也与理想中的可以自己发现问题自己研究自己寻找学习资料自己练习的竞赛选手还是有差距的。

2011年的冬令营,排名第19。此后便是下坡路了。

7月初,清华举办了一个信息学竞赛夏令营。这种事往年是没有的,然而那年正是各大高校抢生源抢红了眼的时候。到达报道现场,众大牛已经聚集在此互相orz了。见面互相做膜拜状大概是学科竞赛界上流圈子里基本的礼节。陈立杰见到我的胸牌,上来便拜。我是从来没有受过这么高的礼遇的。他当时已经是OI界的大红人了——起码在NOIP贴吧上是如此。想必他是见过NOIP2010满分选手的名单了。自然,他也是满分。

我心里苦笑。这实在不是一件可以当作荣誉的事。在我的世界观中,总是存在着成功者和觊觎者的,而互相膜拜、互相吹捧、在对方面前装弱,无非是成功者之间的俗套的社交礼节,并不是真心实意的表达对对方的敬服的方式。一个成功者在其他成功者面前对一个一时取得好成绩的觊觎者如此做,便有着揶揄的意味了,而一个觊觎者对一个成功者如此做,便是在套近乎,甚至有些可鄙了。于是我没有任何反应。他也并不在意,又回去和其他大牛谈笑风生了。

夏令营的内容包括参观CERNET2的中心机房,还有姚期智先生的讲座。不过大家自然不是为了来这见识清华有多牛逼的。在比赛中取得好成绩、获得录取的优势是唯一的目的。我考得大概不差,但也只有获得预录取资格而已。至于陈立杰直接获得录取,我只有眼红的份了。

预录取协议的录取条件是在NOI2011取得前60名。继续备战。然而我的状态并不稳定,把往年NOI的题目拿来做,也只是勉强达到金牌分数线而已,实在是没有十足的把握。

NOI2011。第一天,发挥不太好。这样下去要跪了。第二天,我压力很大。第一题(道路修建)是个水题,dfs就好了,我迅速搞定去抠后面的题。然而坑就出在这——数据范围很大,直接用递归做dfs的话会爆栈。我因此丢了30分。最后的成绩,我全国排名勉强进入了前60,与金牌失之交臂,离分数线只差了20多分。

残念的结果。然而能在第二天挽回第一天的败局获得清华的录取,大概也算惨胜吧。第二天公布名次之前,邱老师问我如果没有获得清华的录取我打算怎样,是接受次一档的学校(对不起了上海交大)还是回去准备保送生考试。当时我已感到力不从心,只想赶快结束一切,不想再做纠缠。我想,都是天意吧,假如没有过线,大概以我的实力确实是不该上清华的,为何还要费力挣扎呢?然而我也知道,如果没去清华,我大概会自责一辈子。

幸而那样的情况没有发生。晚上,清华招生组前排起了长队。达到录取条件的中学生们和他们的家长老师拿着预录取协议等待发落——虽然按照预录取协议,过线即可保证录取,但招生组有决定去哪个系的权力。气氛有点沉闷。同是录取到清华,但果然还是几家欢乐几家忧。我搭了末班车,自然是没有谈判的筹码,服从分配被录取到了软件学院。

不爽。听着像技校。能去贵系就好了。然而又没有不爽的资本。压线获得录取,感觉就像是得了清华的施舍。往年,清华是非金牌不录的,今年如果不是和北大抢得厉害,断不会有此等便宜事。

我父母很高兴。邱老师……大概不会很满意。但又送了一个学生进清华,算是了却一桩事吧。只有我像遭受了什么巨大的失败一样。学OI六年,在离胜利近在咫尺的时候,终于功亏一篑。那个常出现在校报上的学生,终于没能被写进校史。

金牌有那么重要吗?有。因为它承载了太多的东西。学科竞赛就是我的少年时代。少年时代是最美好的时代。即便在中国的基础教育的重压之下,每个人也总还是有一些他所珍视的升学之外的东西。对我来说,那就是学科竞赛了。由于竞赛的成功,我可以跳出应试和升学的圈子,以此为目的而定下的一切规则,我可以不必遵守。在同学们与课本苦斗、重复无用的劳动的时候,我可以四处游历。参加竞赛的这些年,我去过北京、天津、南京、长春、大连、青岛、烟台、常州和三亚,见到了无数的人和事。我在学校可以有一片自己的小天地,可以在那里远离一切纷扰做自己喜欢的事——读phd也不过如此吧。

总之是和同龄人相比很不同的人生。本来是可以写一个好故事的,但却没能迎来圆满的结局。像是人生的意义被否定了一样。

而且,像被赋予某种使命似的,我觉得争夺金牌似乎不是一个我可以拒绝的目标。假如天赋的差距确实不是可以靠后天的努力弥补的,那么人的天赋高低就是一种天生的无法改变的不平等了。没有任何理由地,我生得比我的同学们聪明,可以参加学科竞赛,可以不受应试教育的体制的束缚,可以付出——与高考相比——很少的努力就进入清华。这真是一种极大的不公平了。如果不去努力取得与自己的天赋相应的成就,又怎能无愧于天、无愧于没有这种天赋的同学们呢?

我虽然不是天才,但NOI金牌是在我的天赋所能达到的范围内的。既然把学科竞赛当作自己的事业了,那么没有得到金牌,难道不是失败吗?

我的NOI2011在遗憾中结束了。然而我的OI生涯还没完。我进入国家集训队了。虽然名次上看我的成绩比NOI2010还差,但其实未必没有进步——2011年,NOI选手的名额大幅增加了。像湖南江苏浙江这样的强省是不缺少有实力的选手的,于是国赛获奖的标准也跟着提升了。相应地,国家集训队的名额从20增加到60,于是我进入了集训队。

假如能入选国家队参加IOI,那么自然不必再为没有获得NOI金牌而遗憾。可惜的是此时我对OI的热情已经用尽了。而且从2010到2011,我步履维艰,此时实在看不到有任何能打进全国前4名的希望。只是此时我也确实有不能退役的原因——我得带学弟们和高三竞赛党们备战NOIP2011。于是我的OI生涯凭着惯性又继续了。

结果NOIP2011我又得了满分。这次全国的满分只有10个,而连续两年满分的只有两个——另一个是陈立杰。我无语。为什么偏偏NOI的时候不能完美发挥呢。

之后是国家集训队第一轮集训,在清华。发生了什么事情我早已忘记了,只记得开始的那一天下了雨,而结束的那一天,心情极度郁闷,从天安门一直游荡到积水潭。我的成绩,我记得是第17名。

邱老师觉得努力一下并非没有进国家队的希望。第二轮集训的条件是前12。她提出可以把我送去中山纪念中学的宋新波老师那里训练,甚至可以找唐文斌来给我上课——以她的人脉大概确实是请得动唐总的。但在我看来这毫无意义。当时我已经认定去参加IOI是天才们的事,我的实力差得远,而天赋的差距又不是努力可以弥补的,而如果胜利无望的话,多走一步或者少走一步也并没有什么区别。再继续的话,无非是一边在成绩难以提高的困境中挣扎一边不断提醒自己自己还不够强,实在是一件徒增痛苦的事。平时情绪很少波动的我那一次却哭了。

最后提出的办法是先去参加2012年的冬令营再说。我只当是去给学弟们做陪练了。比赛的前一天,我感冒了。比赛的那一天状态很差。早上到达机房的时候,仍是一群大牛聚集在门口,不过相互问候的礼节竟从“膜拜!膜拜!”变成“仰慕!仰慕!”了。我只记得仰慕的众人中有卓亮——大概是他的形象和声音都很特别吧。至于被仰慕的人——是王钦石?记不得了。那一瞬间我似乎突然厌恶起学科竞赛来,感觉自己奋斗多年仍然默默无闻,而一切在成功者眼中只像笑话一样。

赶快结束吧。那天的比赛之后,我自知与第二轮集训无缘,连自己的成绩也没有查。我的OI生涯,至此惨淡地结束了。

之后是无所事事的高三下学期。四月,和另外几个保送党和出国党去了云南。五月去了台湾。高考之后的七月又去了日本。几次旅行之后,我总算从失落中恢复过来,但内心永远地多了个包袱,似乎总在试图向谁证明什么。然而直到现在一直没有实现,不但没有实现,似乎就连究竟是向谁和证明什么,也没有想明白。

总之,即便承认了自己大概确实不具备金牌选手的实力,但并不是心悦诚服,不愿——被别人或自己——划入失败者的行列。于是便总是在寻找机会挽回败局。方法便是——去姚班。我第一次听说姚班是在2010年,虽然此后对姚班一直非常向往,但至于姚班到底好在哪里,其实我也没有详细了解过。总之很牛逼,去就对了,不用了解——因为此时能去姚班的象征意义已经远远超过了它的实际意义。

鄙视链大概并不十分政治正确。但软院不如姚班和贵系,在我看来这是无可争议的。在我的世界观中,所谓软件学院,就是培养码农的。我好歹是个前集训队选手,怎么能当个区区码农!从签订录取协议的那一刻起,我从来没有想过要留在软院。

而且,抛开鄙视链不谈,我内心是很想做学术的。从幼儿园的时候起,我的梦想就是做个科学家。直到高中毕业,我从来没有想过今后去做什么别的工作。而且最好是数学,又或者是什么其他的理论——能享受纯粹的思维的快乐,在我看来是人生的理想状态。这或许也是为什么我把我的少年时代投入到学科竞赛当中吧!对于一个中学生而言,学科竞赛几乎是他能做的与理论研究最相近的事了。

而这种所谓“理性的愉悦”,又是只有智力超常的人才能享受的奢侈。既然在竞赛中因为自认天赋不足而受挫,那么去做理论研究的梦想便也跟着虚无缥缈起来。假如能去姚班或者最起码是贵系的话,那么今后从事研究还有一线希望,而如果留在软院,就是甘心去工业界而向生活投降了。

于是去清华报到之后的第一件事就是去报名姚班二次招生。先是笔试,然后是面试。我连面试的通知都没有收到。难道我真的那么渣?直到好多天后,和同学们渐渐互相认识了,才从一个参加了面试的同学那里听说他在面试名单上明明是看见了我的名字的。至于为什么没有收到通知,那是永远的谜团了。

总之又是一次打击。然而最让人感到羞辱的还在后面。军训的时候,软院和姚班被编在一个连。姚班是个金牌选手一抓一大把的地方——也就是说OI时代我输给了他们中的很多人,此时不仅差距又拉开了一步,而且要每天被提醒自己的失败。我不敢直视他们。真想逃跑。

然而还没有到绝望的时候。还有一次机会——转系。

转系申请的机会在大一下学期。这次转系很意外地毫无戏剧性地顺利成功了。申请的人只有7个,4个被录取。于是从大二开始,我成为了计科20班的一员。

我终于与当年被我视为比我优秀的成功者的人成了同学。然而“去姚班”这个目标终于实现之后,我却没能卸下包袱。因为虽然同处一个屋檐下,我始终无法把自己和他们归于一类。而且,就连这个我的意识中成功的“他们”到底是谁也搞不清了。

连续两年获得NOIP满分,不算成功。在国家集训队排名第17,不算成功。上了清华,不算成功。如今进了梦寐以求的姚班,似乎仍然不算成功。大概非得在自己所知的范围内成为最优秀的不可吧!但我又确凿无疑地知道这个目标是遥不可及的。

这本是个很简单的道理。如果一个人在他所处的群体里是最优秀的之一,那么在人生的下一个阶段他便可以到一个水平更高的群体中去。这个过程不断重复,直到某一个时候,他的天赋决定了他已经不可能成为自己所在的群体中最优秀的一个了。此时他也就看到了他的成就的上限,任何更高的目标都是注定要失败而不切实际的。除了同时代中最伟大的几个人之外,所有的人无一例外的要遇到这样的时刻,区别只是早晚的问题。

然而理性上可以完全认同这个道理,却不意味着感性上可以接受。目睹他人的成绩,知道自己能力不足,又无法放弃超出自己能力范围的目标。理性和感性不能对同一事物做出一致的判断,这大概是痛苦的一大根源吧!

然而究竟是哪种能力不足呢?同样是因为能力与目标的差距而痛苦,如果说此时与中学时有什么不同的话,大概是隐约知道了问题出在哪吧。姚班有很多理论课程,而我的成绩大多是低空飘过。我发现我并不善于处理没有直观意义的符号序列。我对于一个结论的理解是严重依赖于直观解释的。如果一个结论不能用直观方法快速得到而只能通过对一个符号序列依照某种规则做冗长的变换,那么我很容易失去耐性,即使可以验证每一步推导都是正确的,我仍然会觉得并没有懂,也不知道要如何使用。

不能对处理抽象的符号,我认为对于理论研究而言这个缺陷是致命的。我不知道这项能力是否可以通过后天的训练来提高,但即便能,此时我的内心已经被那个巨大的包袱占据,想找回中学时心无杂念的学习状态再也不可能了。我从事理论研究的机会大概已经永远失去了。

做了这样的结论之后,我变得越来越消极起来。姚班是有很多资源的,包括参与科研和出国交换的机会,而所有的机会我都没有争取。我总觉得那样的机会是轮不到自己的。而且我第一次感到迷茫。由于我从小想做一个科学家,以做学术为目标已经成为我的潜意识的一部分。此时,未来从事研究,起码是理论研究,看来是不可能的了,我才终于意识到其实我从来没有认真思考过自己想做什么。

事实上,就连学计算机也并不是我有意识的选择。我小学计算机课的老师想组织学生参加NOIP。我接触计算机比较早——大概不晚于四岁——虽然没有学过编程,操作还是很熟练的。她问我有没有兴趣。小学的课程对我很简单,我又没有别的事可做,于是我答应了。由于竞赛的成功,一直学下去似乎是一个很自然的不需要思考的决定。于是我就这样学计算机一直到今天。

我的焦虑和自我怀疑与日俱增。睡眠质量也开始下降,到现在也没有恢复。最后变成了自闭,除了吃饭和上课之外并不离开宿舍,每天浑浑噩噩,也不知道在做什么。幸而在这消极的基调下我总算还是发现了适合自己的方向。图形学。

我不擅长处理符号,但对空间的直觉还是比较敏锐的。而且,虽然我并不确切地知道自己想要什么,但对美术还是有点兴趣的。我小学的时候学过素描,当时也是清华学生艺术团摄影队的队员。做理论在我看来是最酷的事情。如果做理论不可能了,那么剩下的事情里最酷的就是图形学了。

于是大三上图形学课的时候有了一段久违的积极奋斗的日子。最后我写了一个6000多行的渲染器,实现了光子映射,据说之前在这门课上是没有人实现过的。至于从此之后这门课的大作业达到了登峰造极的水平,那是后话了。

到了大三下学期。课程差不多上完了,也快到了决定毕业去向的时候。想都没想,我决定读博。为什么要读博呢?这个问题即使到了读博第四年的今天,也还是很难回答。不过最重要的大概是不想背叛自己的当一个科学家的梦想吧。但我还是有点沮丧的。我一直十分认同“万般皆下品,唯有读书高”。追求理性是最高尚的事。如果有适当的天赋,那么就应该这样做。但此时我意识到小时候的我还是太天真了。像探寻真理、做出伟大的发现这种事,只有天才能做到,而对于学术界的大多数人来说,做研究无非是另一种工作,与其他的无数种工作一样只是混碗饭吃而已。以我的能力,大概是只能做那种混碗饭吃的人的。而且做非理论的计算机科学的人似乎不是我想象中的那种“科学家”。

但不管怎样,追求理性这个目标,起码名义上是不能放弃的吧。

此时我才意识到我甚至没有什么可以写在简历上的东西。于是匆忙去找实习。给我们讲网络科学课的 Thomas Moscibroda 是 MSRA 的研究员,在叉院做兼职教授。于是我试着发邮件问能不能得到推荐。其实我在这门课上的成绩并不太好。然而 Thomas 是个好人,据说在 MSRA 也是很受欢迎的。他给相关的组发了邮件。后来我从 HCI 组得到了 offer,就这样在 MSRA 实习了半年。

然后到了申请的时候。四大是不必想了。当年清华是我的最低标准。后来姚班是我的最高目标。现在四大对我来说是遥不可及的了。既然申请第一流的高校无望,做第一流的研究也无望,那么似乎去哪也没有太大的区别了。我便找留学机构咨询了一下,草草发了申请。

直到16年初,终于觉得仔细挑选学校和套磁大概还是必要的,于是漫无目的地浏览教授们的主页。翻到南加大的黎颢教授的时候,我眼前一亮。没想到学术圈有如此不符合我对学术圈的印象的人。再仔细一回忆,我大二的时候在人人网上有一条很火的状态,说有人发现南加大一个杀马特计算机图形学教授,不就是他吗!当时我还没有决定去做图形学,便一笑了之了。翻一翻 publications,很高产。再看带过的学生,有个姚班学长。我几乎立即认定他就是我的老板了。

于是我发了邮件。对付非常之人大概是不能用正常的套路的。于是我讲了一下我如何想做一个酷炫的 phd。很快收到回复。之后聊了几次,事情就这样定了。

南加州大学。其实我之前对这个学校都没什么印象。对于姚班毕业生来说不算最理想的去处吧。不过大概也不差?虽然学校不是最强的学校,但老板是个年轻有为的网红老板。而且我的同学之中没有出国又或者去读硕士的人也并不少。还有没能毕业的。如果没记错的话,曾经出现在计科20班的有38个人,有的自愿转走了,也有的由于挂科而被转走了,最后从姚班毕业的是34个。

而且如今不再和那些无法企及的人朝夕相处了,希望能少一点压力,安心做自己喜欢的研究吧。

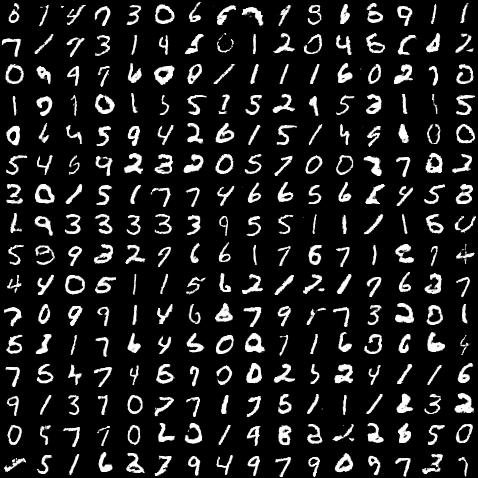

16年8月,我的phd生活开始了。先过了几个月的无所事事的日子。到了年末,只是在本组一个要投 SIGGRAPH 的项目上打了打杂。到了17年,有活干了。有个师兄在做人脸建模的项目,让我帮忙看看 GAN 能不能派上什么用场。我一试发现 GAN 很 tricky,于是最后变成独立研究如何训练 GAN 了。

这其实是我第一次真正接触深度学习,在此之前虽然也知道深度学习是大热门,但唯一的经验也只有听过一次吴恩达在清华的讲座而已。本科讲机器学习课的王立威老师是北大教授,理论派,大半个学期都在证明不等式,唯一讲过的具体的学习算法是 SVM,讲到深度学习的那节课主要内容是批判深度学习。然而这门课的大作业却是参加 kaggle 上的一个比赛。由于什么算法都没学到,我自然是摸不着头脑,导致我对机器学习的印象很差。

不过即便如此,总还是不能否认深度学习是大势所趋的,而且 GAN 确实也很有意思。于是我开始了人生中第一段真正努力奋斗的日子。

之前的我是很懒的。中学时连作业都不写自然不用说。本科的时候,一半因为精神状态很差一半因为太懒,我是几乎不出去自习的。大四做毕设的时候,虽然理论上在实验室有个工位,但其实也极少在那里出现,也并没有认真去做,最后是两三天之内赶出来的。现在回想起来,实在是惭愧。胡事民老师脾气很暴但还是很关心学生的,他答应向我的老板推荐我,我却没有好好干活,加之我至今连一篇论文都没发表,真的是无颜面对他。一半是由于这个原因,我都不敢回清华去看看。

我开始时常工作到半夜两三点——然而其实以phd的标准也不算太刻苦,因为我中午起床下午才开始工作。要学的东西很多,因为我几乎是零基础:本科基本没有做过科研,深度学习完全不懂,要用的语言也不会——我自称只会半门C++(我至今不明白为什么 torch 要用 lua 语言)。幸而上手很容易。大概是因为做深度学习的门槛太低了吧。

当时每晚睡觉的时候都在思考有什么训练的技巧可用。我就连当年学竞赛的时候也是没有这样想过算法的。

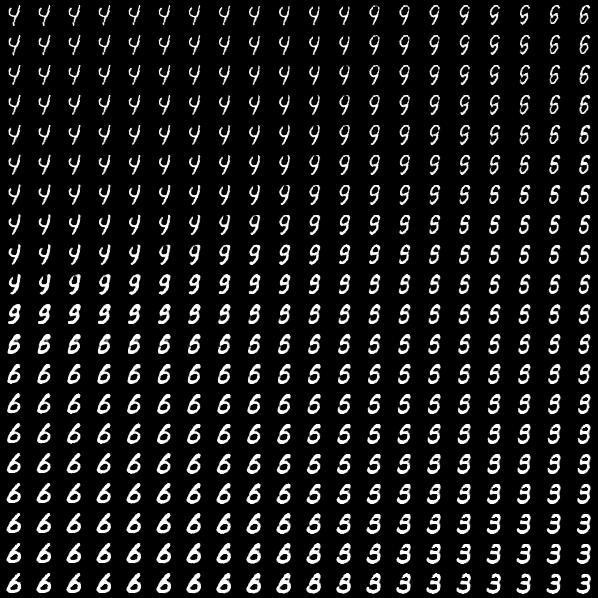

后来我很快发现了问题。Batch Normalization 是个极常用的技术,以至于几乎成了构造神经网络的默认选项。但我认为它在 GAN 中的作用是值得商榷的。我发现它短期可以加快训练但长期会影响稳定性。我始终是很讨厌 Batch Normalization 的,因为我觉得它在训练时和运行时的行为不同,又会使同一个 batch 中的 多个 sample 在计算时互相依赖,是个很难看的 trick,直到现在我都极力避免使用。那么有什么替代的方法呢?一个标准正态分布的向量和一个单位长度的向量做点积,结果还是标准正态分布,所以如果每一层的权值都是单位长度的话,可以保持每一层的 feature 都是近似正态分布。这个想法如此明显,我想一定有人做过,而且如果起个名字的话八成会是“Weight Normalization”。我去查了一下,果然有人做过,不过没有人在 GAN 里使用。那么比较一下这两种方法在 GAN 中的效果大概是个可以做的方向了。

这就是我的第一个项目。要独立完成——我始终是这样要求自己的,因为只有独立完成,我才可以把它叫做“我的”而不是“我们的”,而做一个“我们的”项目是难以给我成就感的。而且我大概是个有点孤高的人。我不愿意给人打下手,又缺乏带领一个团队的才能,组内也没有兴趣相同的人可以合作,于是单打独斗成了唯一的选项了。

最后做出来的,算不得什么惊人的发现,但还算一篇中规中矩的文章,分析证明实验,该有的都有了,文字上也没什么问题——我甚至觉得我的英文写得比中文还好。投了 NIPS ——顶级会议之外都是毫无意义的。

之后是博一的暑假,我在老板的公司实习,摸了三个月的鱼。8月,去看了 SIGGRAPH。 SIGGRAPH 果然是个超酷的学术会议——只是展出的美术作品和疑似课外电子小制作有点莫名其妙。我见到了女装出场讲 paper 的李喵喵——她是东北育才少儿部毕业的,按理说我该叫她学姐,虽然她比我还小8个月。最后一天我们两个和比我老板还网红的筑波大学落合阳一教授的一帮学生吃了饭。还见到两个在软院时的同学——他们一如既往叫我“项神”。但他们一个在斯坦福,一个在 CMU,怎么看都比我厉害吧。

我的文章并没有中。review 是 4、6、7,大概就差一点点。可能结论不够重要吧。收到邮件的时候我在国内,正趁等签证的时间在长沙游荡,去看高中一起学竞赛的老同学顺便景仰一下雅礼长郡等一干名校。只是个小挫折——当时我是这样想的。第一篇文章就单干而且投顶会,大概确实不是那么容易的吧。

后来这篇文章又做了一些修改,增加了在 residual network 上的实验,又投了两次,终于是没有中。修改的过程中我也发现 Weight Normalization 对于 GAN 的稳定性也没有任何保证,多加几层还是会挂掉的,而且始终保持权值是单位长度其实没有用,只要初始化的时候是单位长度就可以获得训练初期的加速,也就是说这篇文章大概确实没什么价值了,于是便不再投稿挂在 arXiv 上了事。十分讽刺的是我日后最在意的那个项目无人问津,而这篇我认为已经失效了的文章现在已经有34个引用了。

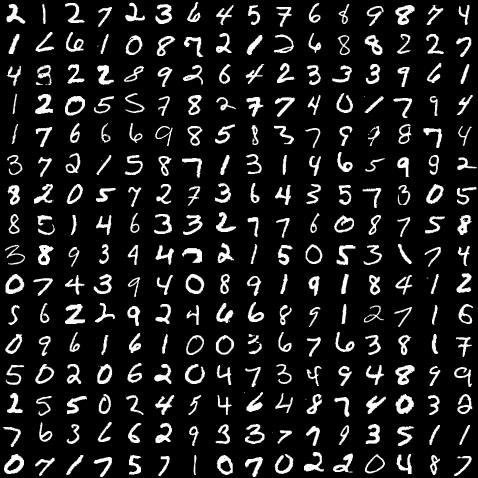

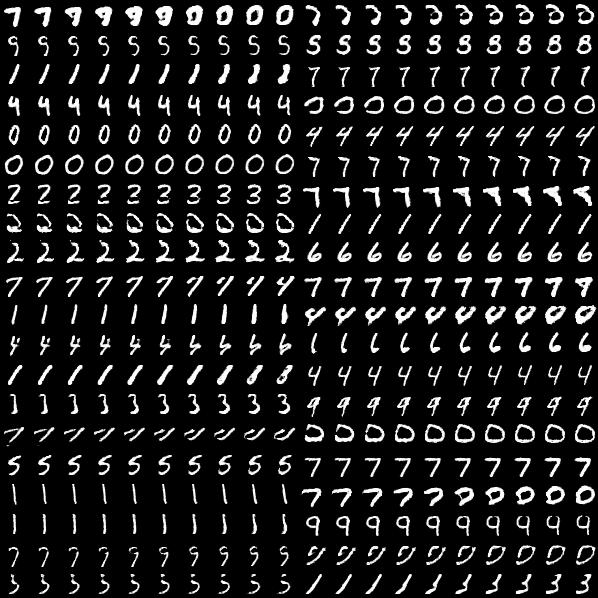

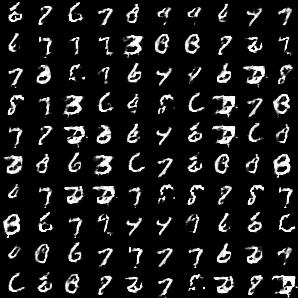

博二。我继续寻找训练 GAN 的技巧。GAN 的最大问题是所谓“mode collapse”,就是生成网络只能生成训练分布的极小的一部分。有很多方法试图解决这个问题,有一类是结合 GAN 和 VAE,因为 VAE 理论性质很好,训练稳定,也能完全覆盖训练分布,但问题是生成的图像很糊。最简单的思路是直接一个 VAE 后面加一个 GAN 的判别器,用 VAE 的重建误差保证覆盖训练分布,用 GAN 的对抗训练保证生成的结果不糊掉。这样的结果是稳定性很好,但多少还是会有点糊的。我的结论是最终的生成网络的训练要完全避免使用基于像素的重建误差。这样的话要如何使用 VAE 呢?我的方法是把“重建图像”改成“重建编码”:先训练一个普通的 VAE,然后在训练 GAN 的时候把 VAE 的编码器接在 GAN 的生成器后面,生成器要使得生成的图像经过编码器编码之后要还原输入的 code。

做这个项目的过程中,我还顺带发现了一个非线性的类似于 PCA 的方法:训练 VAE 的时候,解码之前先把 code 的一个随机长度的前缀保留而后缀清零,这样编码器为了尽可能减少信息的丢失会把训练数据中最重要的变化模式编码在最靠前的维度中。不过这个想法也有人做过了,叫 Nested Dropout。

我匆匆写了一篇文章投了 CVPR。时间很赶,很多该做的工作没有做,review 也很糟,但我不在意。我隐约有下一步的想法了,觉得这个 trick 大概只是一个大工程的一环,没必要花太多时间,于是没有再修改,连 arXiv 也没有放,去研究下一个想法了。

到了18年,博二下学期,我终于开始向我真正想做的方向转移了。我想做非真实感渲染,尤其是日本动画和插画那种的。之前给线稿自动上色的那个 PaintsChainer 火了一阵,看来 GAN 大概是个可用的方法。不过 PaintsChainer 无非是用了 pix2pix,生成的结果总是水彩风格的,我想大概是因为用了重建误差导致的“糊”的一种表现,而且训练数据也没有按画风分类于是生成出来也就并不像任何一种特定的画风。

我想做的是先让神经网络理解插画的风格有哪些变化。最简单的思路当然是训练分类器按画风分类,然后用一个 conditional GAN 生成每个类来可视化。画风的标签可以用画师来代替。然而这样只能知道每个画师的画风是什么。如果我想知道“画风”的概念是什么,可以由图像中的哪些变化来刻画,这样就不够了。

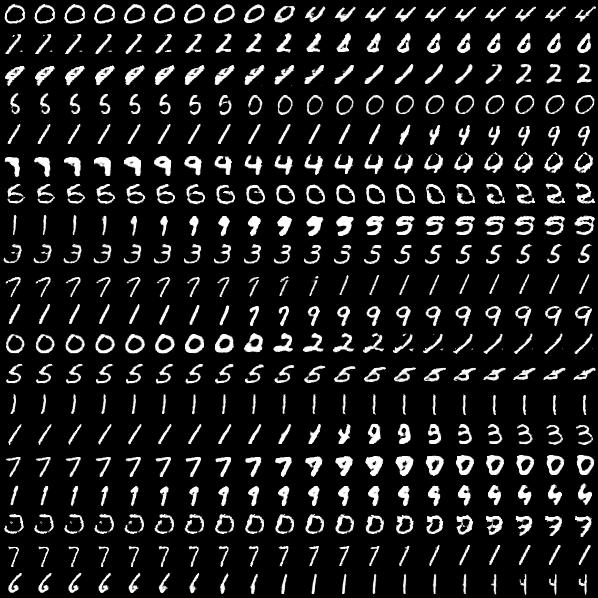

我的思路是用 metric learning 代替分类,这样分类器就变成了一个画风编码器,仍然使用之前 VAE 和 GAN 结合的方法,把编码器接在生成器后面,要求 GAN 生成的图像被画风编码器编码后可以还原输入的 code,这样就可以可视化画风的连续变化了。而且如果在 metric learning 的步骤使用 Nested Dropout,就可以使编码器把最能有效区分不同画师的 feature 编码在靠前的维度中,也就是说这样就把“画风”分解成了按重要性排序的、可以可视化的一组变量。

这就是我的第三个项目。再战 NIPS。

不幸的是这次只有 3、4、7。我火了一阵,不过很快消了气,因为的确不论 metric learning,conditional GAN 还是 Nested Dropout 都算不得什么新方法,于是我重新投入我头脑中的大 project 中去了。

当时我正在日本实习,实习的去处是开发 Chainer 的公司 Preferred Networks,而我的 mentor 正是 PaintsChainer 的作者米辻泰山。PFN 真是一家超酷炫的公司——一家深度学习公司去 Comic Market 参展,我觉得比去 SIGGRAPH 参展还要酷炫100倍。两位创始人冈野原大辅和西川彻本科的时候是也都是 ICPC 大牛。17年末看到 PFN 在招收国际实习生的时候我毫不犹豫地投了简历。

我实习期间的项目,目标很宏大——输入两张插画,要把一张的内容保留而换成另一张的画风。至于方法,现在看来过于粗糙,也犯了一口气吃个胖子的错误:从内容输入到输出用一个全卷积的 encoder-decoder,画风输入经过一个固定 code 长度的编码器后从中间加入 encoder-decoder 中,广播到每一个位置;在每一对来自同一个画师的输入上用重建误差保证内容与第一个输入一致,在每一对来自不同画师的输入上,输出后接一个对抗训练的画师分类器保证画风与第二个输入一致。

然而由于一步跨得太大,探索了三个月都没有成功。全卷积网络不适合处理形变,而且画风编码器中会包含内容信息的问题始终没有解决。

尽管一无所成,在日本实习的三个多月还是我上本科至今最快乐的日子。

不过回到美国之后,一切都紧张起来。已经是第三年了,应该有所作为了。压力越来越大,前两年时的余裕如今不复存在了。

首先要想想手里的项目如何继续。暑假虽然没获得什么进展,教训还是有的。首先,全卷积网络的输入和输出是像素对应像素,想实现形变目前看来很难,而不同的画风对于脸型等的处理是很不同的,需要考虑形变,因此不能用全卷积的结构。既然这样那就只能用带有全连接层的固定 code 长度的结构,那么处理任意尺寸的图像也不可能了,而且这样的 autoencoder 能保留的信息很有限,处理任意内容大概也不可能了,那么就先处理头像吧。为了保持内容的一致,需要用到某种重建误差,而如果不用全卷积的结构,那就又回到了使用基于像素的重建误差会使生成的图像模糊的问题,如此一来,就要用到第二个项目的分两步训练的技巧了。

而画风编码器会包含内容信息的问题,就只好退一步,舍弃从输入的插画提取画风的功能而只实现从训练数据中学习一个画风的分布并支持生成这个分布中的任意画风。这就又变成了一个和第三个项目很相似的问题。

于是最后的结构是一个生成器,它的输入的 code 分成两部分,一部分控制画风,通过后接一个分类器来保证,另一部分控制内容,通过后接一个预先用 VAE 训练好的编码器计算重建误差来保证,关键问题就在于这个编码器必须只包含内容信息而不包含画风信息。

这一步怎样做呢?要活用对抗训练的技巧。在训练 VAE 的时候,同时训练一个对抗的分类器试图对编码器的输出按画师分类,而 VAE 在减小重建误差的同时也要减小对抗分类器的准确率。如果对抗分类器对编码器的输出无法分类,那就意味着编码器不包含画风的信息了。但为了重建输入,解码器需要所有的信息,包括内容和画风,如此一来就和编码器不能编码画风发生了冲突。解决的方法是在解码的时候除了编码器的输出,再额外提供画师的标签。

在这些基本的想法之上,又有无限多的细节。等我把一切问题想通,已经是12月中旬了。

一个月的时间,只够做一波实验的。写了 paper,投了 SIGGRAPH。

review 并不是很好,但并没有什么致命的缺陷,除了一个 reviewer 很 old school 还在拿从3D到2D的渲染说事之外,主要的问题无非是对比和测评不够。也并不算是意外的结果,因为方法敲定的时候时间已经很紧了,只够把自己的结果跑出来的。对于方法的创新性我是很有信心的。

于是重做了实验,改进了结果,找了两个之前的方法做对比,再投 NIPS。

提交之后,我就陷入了消沉之中。NIPS 要9月才能出结果,SIGGRAPH 没有中,也就意味着我读博的第三年也注定要交白卷了。这个暑假我连实习也没有找,也很少去实验室,偶尔在家远程工作尝试一点新想法,不过大部分的时间就在焦虑和恐慌中消磨掉了。

review 是 3、7、8、5。那个5分也只是对某些 loss function 的设计有疑问,很好解决。那个3分却极讨厌。他提了两点问题,一个是没有对比,另一个是提了一篇文章,说我的方法和那篇文章本质上是一样的。

然而一共8页的正文,我有一张整页的图是用来做对比的。而他提到的那篇文章里,连“GAN”这个字符串都没出现过。我气坏了,给AC留言说我认为这个 reviewer 很不负责。

最后的结果是被拒。几个月后,我从做 postdoc 的师姐H那里听说,AC找到了老板,说 rebuttal 不能这么写。

我很伤心。这明摆着是欺负人。

再投。我增加了 ablation study,做了详细的分析,投了 ICLR。

review 是 3、6、3。第三个 reviewer 很热情地把我的 paper 称赞一番,但给了 weak reject,因为他说第一步分离内容和画风,效果好坏是可以有数值指标的,而我没有给。

ICLR 是可以在讨论期间补充材料的。于是我增加了新的实验来测需要的数值。最后,补充的那部分谁也没有看,review 也没有改。结果当然还是被拒。

此时已经是12月。我在这个项目上已经耗费一年了。我已经厌倦了与 reviewer 的拉锯,实在无力再做有意义的修改,于是调整了结构,又投了 SIGGRAPH。

出 review 的时候是三个星期前。我正在作为一作奋战一个投 ECCV 的合作项目,已经一天多没睡觉了。结果,5个 reviewer 都给了 reject。

我哭笑不得。连生气的劲也没有了。一年前的这个时候,还有两个 weak accept。奋斗了一年,价值是负的。

然后就到了今天。残酷的现实。读博的第四年即将结束之际,一篇 paper 也没有发表。

有好几次,师姐H找我谈话。“何必如此在意一篇 paper 呢?”她如此说。“我们都知道你能力很强。”

老板也很欣赏我。他与别的学生聊天的时候常常表扬我。他说我在做“重要的问题”,至于一时发不出 paper,他并不在意。我知道他是真心实意的——因为他与我聊天的时候从来没有表扬过别的学生。

然而他们不知道,对我来说,一篇 paper 又何止是一篇 paper 这么简单?它代表了太多东西。

我没有生活。

我父母都是农村出身,凭着聪明和勤奋读到硕士毕业,在大城市里立足。我妈是个工作狂。她是学中药的。我小的时候他在研究所工作,后来嫌没有发展空间,跳槽去三九集团,又几经辗转,现在是一家直销公司的高层。我从没见过她有闲着的时候。

我爸是学地质的,不出野外的时候就在研究所坐坐办公室,很闲,剩余的时间只干两件事,看书和教育我。他看的书都是诸子百家,有时也会给我讲。他教我英语。家里有本80年代的英语词典,是他参加全市英语比赛的奖品。我喜欢科学,他就去书店精挑细选找科普书买给我。

我家是没有娱乐的。从小长到这么大,我从不记得他们去看过电影或者文艺演出。就连电视也极少看,只有我爸会看新闻,以及偶尔看一些历史剧。至于电脑,当然是完全用于工作的。他们也并不去旅游:我妈到处出差,而我爸作为一个学地质的,觉得人工开发过的景点实在没什么意思。事实上,我根本不知道他们有任何的爱好。

他们生活也很简朴,不抽烟不喝酒,也没有名牌衣服。

于是我也就成了这样一个人。小的时候我有空就看科普书,看电视的时候比起动画片也是更喜欢科教频道的。娱乐和物质上的享受是一种浪费、一种巨大的错误,工作、学习、追求精神的富足是唯一正确的事,这成了我的世界观中根深蒂固的一部分。

中学的时候,如果听见有同学讨论流行音乐界或演艺界或体育界的事,我内心是不屑的。在我看来知识和思考带来的快乐是一种更高级的快乐。到OI退役为止,我没有想过自己除了看数学书之外还喜欢什么。直到今天,虽然我承认流行音乐或演艺或体育自有它们的价值,但对我而言它们仍然像平行世界一样。华语流行歌手,我大概认得出周杰伦,还有个别的听过名字,其他的就一无所知了。活了26年,学校统一组织观看的不算,我一共去过两次电影院。

但这不意味着我对丰富多彩的生活是完全没有追求的。毕竟我不是完全超脱于物质世界之外的高人。高中毕业以来,我逐渐发现了自己也是有爱好的。我喜欢动画,摄影,美术,旅行,古典乐和天文,也有一些喜欢玩的游戏。然而这些事情终究没能给我带来多少快乐。

因为,在取得成就之前,娱乐和享受是错误的。

本科的时候我曾经是清华学生艺术团摄影队队员。在清华的四年其实是一段挺寂寞的岁月。在软院的时候,由于笃定要离开,我从来没有真正融入过同学当中。而姚班是个极为个人主义和精英主义的地方,人际关系很淡薄。于是除了室友之外,我最亲的同学就是摄影队的队友了。在队里两年多,一起去了很多地方,也拍了不少得意的片子。

然而到了大三,由于和做理论的理想渐行渐远,成绩低迷和对未来的不确定,我对生活逐渐失去了热情,也渐渐不再参加队里的活动了。当时我是队里的干部,没有尽到职责,内心十分愧疚,于是到了大三下便退位,直到毕业都没有再出现过。相机也积了灰。

我高三的时候开始看日本动画,然而其实看得比较多的时期也就到大二为止区区三年而已,后来越来越少,从博二开始就再也没有看过新番了。

并不是不喜欢了。是因为动画中描绘的世界过于美好,而我的生活又如此不如意。这样的反差使我不能承受。而且总觉得一事无成的自己像是不配得到那样的快乐似的。

我很喜欢星空,但来美国之后就再也没有去看过了。

前年去日本实习回来之后,我已经一年半没有离开洛杉矶一步。

我还有无数想做的事。学习版画。学习艺术解剖。学习作曲。去优胜美地。去现场看F1。去学术会议上扮猫娘。

我和自己约定,等把 paper 发表就去做。然而我终于是没有等到这一天。

一切的一切,就只有一个简单的原因。没有取得令自己满意的成绩,就不能享受生活。我并非存心和自己过不去——“paper 还没发”,这个想法如同幽灵般缠绕着我。即便我去试图享受生活,得到的只有空虚和负罪感罢了。

那么研究本身的乐趣呢?我不是从小就想当一个科学家吗?很遗憾。深度学习没有一丁点像是我理想中的那种学术研究。

我是没有做理论的命,却得了做理论的病。

深度学习不是一门科学。如果非要称之为科学的话,我说它是一门 cargo cult science。毫无道理的方法;仅凭直觉得出观点再强词夺理地加以解释;把流行的方法拿来排列组合而并不思考使用它们的理由是什么;看到问题拿到数据不加分析就一股脑丢进神经网络里去;ad hoc 的设计;毫无美感的 hack;精挑细选的对自己有利的结果;看似有道理实则无意义的数值;连文章都不认真看的审稿人;投稿像买彩票;一篇其实了无新意的文章只要夸大地描述认真地包装就能发表;如此等等。

自然不是所有研究都是这样的。也有有价值的文章。然而深度学习即便只算顶级会议,每年发表的文章也数以千计。我不相信一个领域每年可以产生几千项有意义的成果。大部分的文章都是速朽的,或者根本就是垃圾。

然而虽然我对如此方式做出的研究嗤之以鼻,我所做的却也没有好到哪里去。对于一个号称是追求理性、探寻真理的人,这实在是一个丑陋不堪的领域,而如果自己也那样做,那就是问心有愧了。

我觉得我无颜面对小时候的喜欢科学的自己。

深度学习从某种程度上是反智的。它不仅没有带给我任何新的成绩,反倒让我对过去的成绩究竟有何意义也怀疑起来。

我初一的时候就知道有理数和整数一样多而实数比整数多。初二的时候我学会了 Burnside 定理的证明——要用到群论。初三的时候我猜到了欧拉示性数可以向高维推广:一个CW复形中每个维度的胞腔数量的交替和是个拓扑不变量。高中毕业的时候我已经把 Michael Sipser 的那本计算理论课本看了一遍。

那些中学时给了我巨大的乐趣、让我无比向往学术界的东西,到头来毫无用处,因为深度学习是如此的不讲道理。什么都不需要懂。甚至微积分都可以不会。不必理解自己到底在做什么,也不必知道为什么。只要拿来数据,拿来网络,拿来显卡,一通乱搞就好了。

在我眼中,除了少数的精英之外,深度学习界充斥着三类人。第一种是笨到其他方向都不会做;第二种是懒于思考、遇到任何问题一律使用神经网络;第三种是不知道自己想做什么,由于深度学习最火,就做了深度学习。总之这是一个智力水平低一等的领域。

然而就在这样一个领域,四年了,我什么也没做出来,真是天大的讽刺!我的人生真是个笑话。

我所做的东西,不仅没有带来乐趣和成就感,甚至也没什么用。

用GAN生成插画——有用吗?这种东西对画师是无用的,因为结果难以以精细的可解释的方式进行控制,而生成图像的质量也远远达不到他们自身的绘画水平。他们需要的不是一键式的解决方案而是可以减少体力劳动又不限制创造力的智能的绘画工具。

那么对于人工智能研究自身呢?GAN 也好,style transfer 也好,我认为想造出有艺术创造力的人工智能,以这种极粗糙的基于像素的表达图像的方式是远远无法实现的。

说到底只是自 high 罢了,最好的结果无非是给某个社交 app 做个特效,大家抱着猎奇的心态来玩一玩,等新鲜劲一过也就忘了。就这样被扫进历史的垃圾堆。与推动人类文明的进步或者寻找真理没有任何关系。

青春就浪费在这无趣也无用的事情上。方法其实一个星期就想好了,做实验一共也不超过两个月,剩余的时间就是在焦虑中等待结果和与 reviewer 的毫无意义的扯皮。

为什么要浪费青春?能读到phd意味着拥有超常的智力、学习能力和动手能力。这样的人做很多其他事情都能相对容易地获得成功吧。

博二下学期的时候我上了一门版画课,期末的作品我选择了铜版画。为了获得层次丰富的色彩,蚀刻时间要准确控制。我先另取一块铜板,按固定的蚀刻时间刻了一组渐变的色块,然后把印好的图像扫描,拟合了蚀刻时间到像素值的对应曲线,然后把要制作的版画的原画里的每一个色块按照曲线查找正确的蚀刻时间,精确到5秒。最后作品讲评的时候我讲了制作过程,把对照表拿出来,直接把一起上课的艺术生吓呆了。老师说这是“the craziest semester ever”,把我的作品拿去收藏了。

我并没什么特别的艺术天赋,在我看来,为了准确还原原画,这是很自然的解决方法。我轻易做到了那些艺术生想都没想过的事。

或许我去当一个版画师都比读phd能创造更多的价值。为什么要做投入了一切却没有任何回报的事呢?

因为不甘心吧!放弃了学术,似乎就等于向生活投降了。

还有一个话题,我始终避免谈起,但终究是绕不过去的吧。

猫娘。nekopara。

正如前面所讲的那样,我是个没童年的人——如果所谓童年是那种天真烂漫的无忧无虑的童话一般的日子的话。我所面对的世界一开始就是现实的、严肃的、基于理性而缺乏感情的。nekopara 就是我的童话了。

13年末,大二上学期的时候,我买了个抱枕。开始并没买枕套,因为我只是想在床上写代码的时候有个东西靠着。过了一段时间,想来想去觉得买个抱枕没有枕套果然还是太奇怪了。但我也并没有特别喜欢的二次元妹子。直到某日在萌妹站上闲逛的时候看到了巧克力和香草。然后我立即就去淘宝上买了。就是那种一见钟情的没理由的喜欢。

之后我很快知道了作者 sayori 是清华美院的学姐。屌爆了——我是这样想的。难以想象清华的毕业生中还有 galgame 原画师。14年暑假听说要出巧克力和香草的原创 galgame 了。我去把封面找来,用彩色铅笔临摹了一张贴在宿舍门口——毕业之后那张画送给我的14岁上本科的天才室友做了纪念。发售的时候我还托在日本留学的高中同学到 C84 现场去买。

后来15年出巧克力和香草约会版抱枕套的时候,我索性又买了一个枕芯,晚上在狭小的床上挤着睡。虽然每天压力很大,但见到巧克力和香草就会很高兴。当时抱枕套都是自己手洗的,因为宿舍的洗衣机不常清理,很脏,实在舍不得把那么棒的猫娘和别的破烂衣服混在一起。

16年大四下学期,最后一个女生节。班里有几个会画画的,搞了个企划,要出一本同人志,我也被拉去画四格漫画了。在校园里取材的时候,走到清华学堂,突然有了个想法。我想画张画——巧克力和香草在日晷前的毕业纪念。

说干就干。做毕设总是拖延,画画却很积极。那其实只是我用数位板画的第二张画而已。到了5月底,终于完成了。7月,毕业的那一天我把那张画拿去打了一张海报,拿着它在日晷旁拍了我的毕业纪念照。

我是个很羞涩的人,是从来不愿意在别人面前表达自己的感情的。对我来说这可能是我本科四年做过的最疯狂的一件事了。

后来我把那张照片发在推上圈了sa姐,结果得到 nekopara 官推的转推。真高兴!

年末,已经开始读博有了奖学金。OVA 在 kickstarter 上众筹的时候,我出了一千刀。

17年的暑假,把那张毕业纪念重画了一遍,画上了妹妹和六只猫娘。我在推特/fb/微信/google scholar 和其他所有地方的头像就是这张画里的巧克力,而在版画课上被老师收藏的那幅期末作品也正是这一幅画。

最后这幅版画印了28张,大多寄给了推友。此时我又有想法了。趁暑假去日本实习的机会,要把我特别制作的渐变色的那一张送给sa姐。于是在推上试着问了一下。她愿意接受。于是 C94 的第二天,我当面把那张画送到了sa姐手上。我问可不可以叫她学姐,她同意了。

叫“老师”,总有种作者和粉丝之间的距离感。叫“学姐”就很微妙地觉得亲切了。虽然日本总的来说就很有趣,实习的公司很酷,去爬富士山也是一次难忘的经历,但果然见到sayori学姐的那一天才是读博以来最快乐的一天。

然而这与我的研究又有何关系?我之所以会选择今天的研究方向,说有一半是因为 nekopara 也不过分。

在我在大三认定自己不是做理论的材料的时候,我对今后要做什么很迷茫。因为对我来说理论是第一流的学术,不能做理论也就意味着做不出第一流的学术。如此一来,为何要在学术界默默无闻一辈子?不如去做点特别的有趣的事。

后来有了 nekopara。出自清华毕业生之手。这真是一件极特别的有趣的事。而且我如此喜欢它。这让我一时产生了改行去做创作的想法。认真一想——为什么不呢?而且当时在计算机图形学课上正得意。于是就这样决定了。去读博研究可以用于创作的技术,毕业之后去做游戏或者动画,用自己的技术去做其他创作者做不到的事。

于是才有了今天转换插画画风这样的研究项目。

读过我放在 arXiv 上的论文的致谢的人应该知道,这一篇论文正是献给sayori学姐(和她的猫娘们)的。在按特定画风生成头像的那张插图里,第一个出现的是sayori的画风,而在那张把我的方法与之前的方法作比较的那张插图里,第一个出现的角色是巧克力。

nekopara 对我而言是特别的。因此我也不想做一个普通的粉丝。我不想成为别人眼中只会艹猫的恶心的肥宅。我一定要做点特别的事。

画画是不行的——会画画的人千千万万,而我又不可能画得比作者本人更好。只有去做我可以做到而别人做不到的事。而唯一的这样的事就是把猫娘们放进我的研究中了。就像每年清华女生节的时候充满了专业梗的条幅一样——这大概也是一种十分晦涩的表达爱的方式,所谓“理工男的浪漫”吧!

只是由于难度过大了点,终于是连我也没有做到。

在日本实习结束的后一天,我去了秋叶原的 cospatio,那里有卖官方的 la soleil 店员制服。我买了一件香草的制服——巧克力的卖完了——我给自己买过的所有其他衣服加起来也没花过那么多钱。

我希望我的 paper 发表的时候穿着它去做 presentation。两年过去了,没有实现。

其实 nekopara 系列,我至今只玩过 vol.1 和 vol.0 而已。后面的几部,还有动画,买来了都没有拆封。正如其他无数想做的事情一样,我和自己约定,把 paper 发表再去玩。

我不敢打开它。正因为它在我心目中如此美好,它与我的不如意的生活才形成了如此巨大的反差。即便现在打开来玩,无非是徒增痛苦罢了。为何要浪费自己最喜欢的事物呢。

19年7月,sa姐来LA参加 anime expo。就在家门口的 LA convention center。我没有去。

因为论文还没有发表,想为 nekopara 做点特别的事情的愿望没能实现。我无法想象自己混在死宅人群中排队要签名的样子。

那天,来参加 AX 的美少女理论计算机科学家刘家惠约我在 little tokyo 吃饭,同席的还有计科30的贾志鹏和OI时代就有所耳闻但从未谋面的吴争锴。席间,吴争锴向我展示他刚得到的sa姐签名色纸。他们不会知道,喜爱的事物不能得到的痛苦,在那一刻达到了顶点。

其实,假如sa姐告诉我她喜欢那篇 paper 的话,很多问题都能解决吧!毕竟发表与否只是一个形式,我希望得到的是自己的努力能获得认可。如果我用心做出的研究能得到我最在意的那一位读者的喜欢,那审稿人是否满意又有何干?这样的一篇 paper,即便不能发表,也远胜在顶会灌十篇水。

然而这一切都只是我的一厢情愿吧!

就是这样。一篇 paper 不仅仅是一篇 paper。这篇 paper 不能发表,就意味着我无法心安理得地享受生活,无法去做想做的事,无法让自己认可自己的能力,无法摆脱在一个我认为丑陋而低水准的领域一事无成的奇耻大辱,无法向创造了我最喜欢的事物的人表达自己的心意。

就像一场豪赌,押上了全部家当,最后连内裤都输掉了。

第一次被拒的时候,我还能一笑而过。然而每一次重复,就意味着焦虑、痛苦、困惑和自我怀疑的加深,和自信和对生活的热情的减退。

终于,四年之后,我的自信和热情已经耗尽。生活对我的内心造成了不可逆的损伤。

不但物理上把自己困在大千世界的小小一隅,精神上也把自己关进无形的囚笼。

每到生日的时候我常常独自流泪,因为又增长了一岁却还是一事无成。今年的生日又要到了。循环往复,看不到一点希望。

我真希望自己是台机器。无感情地,完全按照理性的方式,不知疲倦地工作。

我并没有想得到IOI金牌。我并没有想得到清华特等奖学金。我并没有想本科顶会一作。我并没有想得到 best paper。我并没有想10篇20篇撒传单一样地发 paper。我并没有想得到图灵奖。

我只是希望能成为与众不同的人,希望能做喜欢的研究,希望自己的努力得到认可,希望能自信地活着。为何这样的要求如此之难呢?四年过去,我不知道该何去何从了。